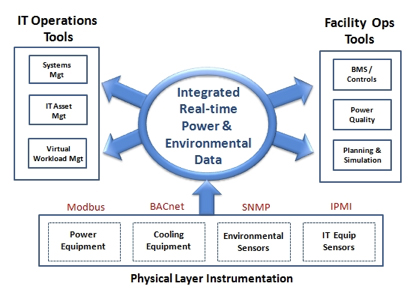

TL;DR: Data center monitoring has been difficult to deploy because facilities equipment vendors have used proprietary, poorly documented management protocols, creating vendor lock-in. While IT gear has standardized on protocols like SNMP, facilities systems (power, cooling) have resisted interoperability. Modern data center infrastructure management (DCIM) platforms and wireless sensor systems now make integrated monitoring across IT and facilities equipment practical and easy to deploy across distributed sites.

Why Has Data Center Management Been Treated as an Art?

Data center management has historically been considered an “art” rather than a science. Emotions, previous experiences, and personal priorities have driven investment decisions within the data center, with the approach varying widely from company to company. Various technologies sound promising at first, but how they play out over time depends on capabilities, costs, timing, and a degree of chance.

This context helps explain why it has been so hard to deploy data center monitoring that covers both IT gear and facilities equipment and fits within standard IT planning and performance processes.

What Makes Facilities Equipment Monitoring So Difficult?

IT gear vendors have done a fairly good job of standardizing management protocols (such as SNMP) and access mechanisms. Facilities equipment is a different story: over the years there have been far too many incompatible management systems, and many remain proprietary, undocumented, or poorly documented.

Facilities equipment manufacturers have been in no hurry to make their devices communicate with the outside world. “Their equipment, their tools” has been the way of life for facilities gear vendors, a practice best described as “vendor lock-in.” Ironically, these facilities subsystems (power and cooling) are arguably the most mission-critical part of running IT cost-effectively.
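When every vendor speaks its own dialect, a unified view usually depends on a translation layer. The sketch below illustrates that idea under stated assumptions and does not describe how any particular DCIM product works. The vendor names `vendor_a` and `vendor_b` are hypothetical, as are their payload field names and the `Reading` schema. Each vendor gets a small adapter that maps its readings into one common record, so downstream reports never need to know which vendor produced the data.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Reading:
    """A vendor-neutral reading in a (hypothetical) common schema."""
    site: str
    device: str
    metric: str   # e.g. "power_kw" or "supply_temp_c"
    value: float


def from_vendor_a(site: str, payload: dict) -> List[Reading]:
    # Hypothetical format: {"unit": "UPS-1", "outW": 12500}, power in watts.
    return [Reading(site, payload["unit"], "power_kw", payload["outW"] / 1000.0)]


def from_vendor_b(site: str, payload: dict) -> List[Reading]:
    # Hypothetical format: {"id": "CRAC-3", "supplyF": 64.4}, temperature in Fahrenheit.
    celsius = (payload["supplyF"] - 32.0) * 5.0 / 9.0
    return [Reading(site, payload["id"], "supply_temp_c", round(celsius, 2))]


# One adapter per vendor dialect; adding a vendor means adding one entry here.
ADAPTERS: Dict[str, Callable[[str, dict], List[Reading]]] = {
    "vendor_a": from_vendor_a,
    "vendor_b": from_vendor_b,
}


def normalize(site: str, vendor: str, payload: dict) -> List[Reading]:
    """Translate a vendor-specific payload into common Reading records."""
    try:
        adapter = ADAPTERS[vendor]
    except KeyError:
        raise ValueError(f"no adapter registered for {vendor!r}") from None
    return adapter(site, payload)
```

For example, `normalize("nyc-1", "vendor_b", {"id": "CRAC-3", "supplyF": 64.4})` yields a single `supply_temp_c` reading of 18.0 for CRAC-3. Keeping each vendor's quirks inside its own adapter means an undocumented or changed protocol affects one function rather than every report.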

Two Barriers to Unified Data Center Monitoring

Two key factors have historically blocked effective data center monitoring:

- Data center design as art, not science. Depending on leadership, organizations place differing levels of emphasis on different technology areas, resulting in inconsistent monitoring strategies.

- Monitoring perceived as difficult and low-impact. Data center monitoring has historically been viewed as hard to deploy across the full range of devices, and the limited reports it did produce were seen as insignificant to the bigger picture.

How Has the Business Case for Monitoring Changed?

Times have changed. Senior leadership across corporations is now asking IT organizations to operate with the rigor of a science. Accountability, effectiveness, ROI, and efficiency are all part of the new daily mantra. Management needs repeatable, defensible investments that can withstand scrutiny and adapt to change.

Additionally, the cost of the energy needed to run IT gear for three years now exceeds the initial acquisition cost of that same gear. In response, the most innovative data center managers are taking a fresh look at deploying active, strategic data center monitoring as part of their baseline operations. Without monitoring, how would a data center manager know where to invest in energy efficiency technologies, establish baselines, or measure results?
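The three-year comparison above is simple arithmetic that a manager can rerun with local numbers. The sketch below shows one way to do it under stated assumptions. It treats average power draw as constant and applies a single blended electricity price. It also uses power usage effectiveness (PUE, total facility power divided by IT power) to fold in cooling and power-distribution overhead. The function name `energy_cost` and all input values are illustrative, not figures from this article.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours (ignores leap years)


def energy_cost(avg_it_kw: float, pue: float, usd_per_kwh: float,
                years: float = 3.0) -> float:
    """Estimate the energy cost of running an IT load for `years`.

    avg_it_kw:   average power drawn by the IT gear itself, in kW (assumed constant)
    pue:         power usage effectiveness = total facility power / IT power,
                 which adds the cooling and power-distribution overhead
    usd_per_kwh: blended electricity price (assumed flat over the period)
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    facility_kw = avg_it_kw * pue              # IT load plus facilities overhead
    kwh = facility_kw * HOURS_PER_YEAR * years  # total energy over the period
    return kwh * usd_per_kwh
```

With purely illustrative inputs (a server averaging 0.5 kW, a PUE of 2.0, and $0.12/kWh), `energy_cost(0.5, 2.0, 0.12)` comes to roughly $3,154 over three years. If that server cost less than that to buy, energy has already overtaken its acquisition cost. Note how the PUE term doubles the bill in this example, which is why power and cooling belong inside the monitoring scope.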

How Easy Is It to Deploy Data Center Monitoring Today?

Data center monitoring can now be deployed easily across all of a company’s geographically distributed sites. The approach is to leverage the instrumentation that ships in newly purchased IT gear and to add wireless sensor systems wherever gaps remain.
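The approach described above relies on existing instrumentation first and fills gaps with wireless sensors. It can be sketched as a simple merge. Several details are assumptions for illustration: the `site/rack` key format, the single inlet-temperature metric, the function name `merge_sources`, and the rule that built-in readings take priority over wireless ones.

```python
from typing import Dict, List, Tuple


def merge_sources(
    racks: List[str],
    built_in: Dict[str, float],
    wireless: Dict[str, float],
) -> Tuple[Dict[str, Tuple[float, str]], List[str]]:
    """Choose one inlet-temperature reading per rack across all sites.

    Prefers instrumentation that ships in the IT gear; falls back to a
    wireless sensor where the gear reports nothing. Returns the merged
    readings as (value, source) pairs plus the racks with no coverage.
    """
    merged: Dict[str, Tuple[float, str]] = {}
    uncovered: List[str] = []
    for rack in racks:
        if rack in built_in:
            merged[rack] = (built_in[rack], "built-in")
        elif rack in wireless:
            merged[rack] = (wireless[rack], "wireless")
        else:
            uncovered.append(rack)  # candidate location for a new wireless sensor
    return merged, uncovered
```

For example, suppose racks `nyc-1/A1`, `nyc-1/A2`, and `sfo-2/B1`, where only A1 reports from built-in instrumentation and A2 has a wireless sensor. The merge covers both New York racks and returns `["sfo-2/B1"]` as the remaining gap. The uncovered list doubles as a shopping list for where additional sensors would be needed.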

Today, integrated data center monitoring across IT gear and facilities equipment is not only possible but practical and straightforward to deploy for any organization that chooses to do so.

Frequently Asked Questions

Why is data center monitoring historically difficult to deploy?

Two primary factors have made deployment challenging: data center design has often been treated as an art rather than a science, leading to inconsistent monitoring investment, and facilities equipment vendors have maintained proprietary, poorly documented management protocols that resist integration with standard IT monitoring tools.

What is vendor lock-in in data center facilities equipment?

Vendor lock-in occurs when facilities equipment manufacturers use proprietary management interfaces and protocols, forcing customers to use only that vendor’s monitoring tools. This makes it extremely difficult to create unified visibility across the multi-vendor environments found in most data centers.

How has data center monitoring changed in recent years?

Modern DCIM platforms can now integrate data from virtually any IT and facilities device, leveraging existing instrumentation built into recently purchased equipment and supplementing with wireless sensor systems. Integrated monitoring across geographically distributed sites is now both practical and cost-effective.

Why is integrated data center monitoring important for energy efficiency?

Without baselines built from continuous measurement, data center managers cannot identify where to invest in energy efficiency improvements or verify whether those investments succeeded. With energy costs over a three-year period now surpassing initial equipment acquisition costs, strategic monitoring has become essential for managing operational expenses.
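A baseline turns “did it work?” into a number. The sketch below is a minimal illustration: it compares mean daily energy use before and after an efficiency project. The function name `percent_change` and the sample values are hypothetical. It assumes the two periods ran under comparable conditions (similar IT load and weather), which a plain before/after comparison does not control for.

```python
from statistics import mean
from typing import Sequence


def percent_change(baseline: Sequence[float], after: Sequence[float]) -> float:
    """Percent change in mean energy use relative to a measured baseline.

    Negative values mean consumption fell after the change.
    """
    if not baseline or not after:
        raise ValueError("both periods need at least one measurement")
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean must be non-zero")
    return (mean(after) - base) / base * 100.0
```

With illustrative daily readings averaging 1,200 kWh before and 1,100 kWh after, `percent_change([1200.0, 1180.0, 1220.0], [1100.0, 1080.0, 1120.0])` returns about -8.3, an 8.3% reduction. Without the baseline readings, there would be nothing to compare against.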

Can DCIM monitor both IT gear and facilities equipment?

Yes. Modern DCIM solutions like Modius OpenData can simultaneously monitor IT equipment (servers, switches, routers) and facilities infrastructure (PDUs, UPS systems, CRAC units, generators) from a single unified platform, bridging the traditional gap between IT and facilities operations.